A Look at Optimization Algorithms for Neural Networks

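An optimization algorithm adjusts the parameters of a neural network (its weights and biases, not its hyperparameters) so that the loss function on the training set becomes as small as possible. The core idea is to compute the gradient (derivative) of the loss with respect to the parameters and use it to decide the direction in which to update them.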
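
To make the idea of "follow the gradient" concrete, here is a minimal sketch on a one-parameter toy problem. The loss function, the starting point, and the learning rate are all assumptions chosen for illustration; the gradient is estimated numerically with a central difference.

```python
def loss(w):
    """Toy loss with its minimum at w = 3 (hypothetical example)."""
    return (w - 3.0) ** 2


def numerical_gradient(f, w, h=1e-4):
    """Central-difference estimate of df/dw at w."""
    return (f(w + h) - f(w - h)) / (2 * h)


w = 0.0    # assumed starting value
lr = 0.1   # assumed learning rate
history = [loss(w)]
for _ in range(50):
    grad = numerical_gradient(loss, w)
    w -= lr * grad   # move against the gradient to reduce the loss
    history.append(loss(w))
```

Each step shrinks the distance to the minimum by a constant factor here, which is why the recorded losses in `history` keep decreasing.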

SGD

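Stochastic Gradient Descent (SGD) is a variant of gradient descent that, at each parameter update, computes the gradient from a single randomly chosen sample instead of the whole dataset. In practice a small random mini-batch is commonly used, as in the update loop later in this article. Because the cost of one update no longer depends on the size of the dataset, SGD greatly reduces computation per step and speeds up training, which makes it well suited to large datasets.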
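
The random selection can be sketched as follows. This is only an illustration: the synthetic data, the helper name `get_batch`, and the batch sizes are assumptions, not part of the original code.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic training set (assumption): 1000 samples, 4 features, binary labels.
x_train = rng.normal(size=(1000, 4))
t_train = rng.integers(0, 2, size=1000)


def get_batch(x, t, batch_size, rng):
    """Hypothetical helper: draw batch_size distinct samples at random."""
    idx = rng.choice(len(x), size=batch_size, replace=False)
    return x[idx], t[idx]


# Strict SGD uses one sample per update ...
x_one, t_one = get_batch(x_train, t_train, 1, rng)
# ... while mini-batch SGD uses a small random subset.
x_batch, t_batch = get_batch(x_train, t_train, 32, rng)
```

Either way, the gradient computed on the sampled data is a noisy estimate of the gradient over the full training set.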

A Python implementation of SGD:

    class SGD:
        """Stochastic Gradient Descent"""

        def __init__(self, lr=0.01):
            self.lr = lr  # learning rate

        # Update the parameters in place
        def update(self, params, grads):
            for key in params.keys():
                params[key] -= self.lr * grads[key]

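The argument lr is the learning rate. The arguments params and grads are dictionaries that hold the weight parameters (for example params['W1']) and the corresponding gradients (for example grads['W1']). The update method applies the gradient update to the parameters.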

With this SGD class, the parameters of a neural network can be updated as in the following pseudocode:

    network = nn.layernet()
    optimizer = SGD()

    for i in range(10000):
        x_batch, t_batch = get_batch(..)
        # Get the gradients of the parameters
        grads = network.gradient(x_batch, t_batch)
        # Get the parameters
        params = network.params
        optimizer.update(params, grads)
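
For readers who want something that actually runs, the loop can be sketched on a toy regression problem. Everything besides the SGD class is an assumption made for this example: `LinearNet` is a hypothetical stand-in for the network, the data is synthetic, and the learning rate and batch size are arbitrary.

```python
import numpy as np


class SGD:
    """Same update rule as the SGD class in the article."""

    def __init__(self, lr=0.01):
        self.lr = lr

    def update(self, params, grads):
        for key in params.keys():
            params[key] -= self.lr * grads[key]


class LinearNet:
    """Hypothetical one-layer model y = x @ W1 + b1 with mean squared error."""

    def __init__(self, n_in, rng):
        self.params = {"W1": rng.normal(scale=0.1, size=(n_in, 1)),
                       "b1": np.zeros(1)}

    def loss(self, x, t):
        y = x @ self.params["W1"] + self.params["b1"]
        return float(np.mean((y - t) ** 2))

    def gradient(self, x, t):
        y = x @ self.params["W1"] + self.params["b1"]
        diff = 2.0 * (y - t) / len(x)   # d(loss)/dy for the mean squared error
        return {"W1": x.T @ diff, "b1": diff.sum(axis=0)}


rng = np.random.default_rng(0)
x_train = rng.normal(size=(200, 3))
t_train = x_train @ np.array([[1.0], [-2.0], [0.5]]) + 0.3  # noise-free targets

network = LinearNet(3, rng)
optimizer = SGD(lr=0.1)
loss_before = network.loss(x_train, t_train)

for i in range(500):
    # Draw a random mini-batch of 20 samples
    idx = rng.choice(len(x_train), size=20, replace=False)
    x_batch, t_batch = x_train[idx], t_train[idx]
    grads = network.gradient(x_batch, t_batch)
    optimizer.update(network.params, grads)

loss_after = network.loss(x_train, t_train)
```

Because the optimizer only sees dictionaries of parameters and gradients, swapping in another optimizer with the same update method would not require any change to the loop.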

Momentum

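Momentum is a concept borrowed from physics. The update rule, written to match the implementation that follows, is:

$$v \leftarrow \alpha v - \eta \frac{\partial L}{\partial W}$$

$$W \leftarrow W + v$$

Here $W$ denotes the parameters, $L$ the loss, and $\eta$ the learning rate (lr in the code). The new variable $v$ plays the role of velocity in physics, and the new parameter $\alpha$ (momentum in the code, 0.9 by default) controls how much of the previous velocity is carried over to the next step. A Python implementation of Momentum: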

    import numpy as np


    class Momentum:
        """Momentum SGD"""

        def __init__(self, lr=0.01, momentum=0.9):
            self.lr = lr
            self.momentum = momentum
            self.v = None  # velocity per parameter, created on the first update

        # Update the parameters in place
        def update(self, params, grads):
            if self.v is None:
                self.v = {}
                for key, val in params.items():
                    self.v[key] = np.zeros_like(val)

            for key in params.keys():
                self.v[key] = self.momentum*self.v[key] - self.lr*grads[key]
                params[key] += self.v[key]
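
A small sketch can show what the velocity term buys. Assuming, purely for illustration, that the gradient stays constant at 1.0, the velocity recurrence from the class above builds up until the step is lr / (1 - momentum) in size, ten times a plain SGD step with these defaults.

```python
lr, momentum = 0.01, 0.9
grad = 1.0          # assumed constant gradient, for illustration only

v, w = 0.0, 0.0
steps = []
for _ in range(200):
    v = momentum * v - lr * grad   # same recurrence as Momentum.update
    w += v
    steps.append(v)

# Limit of the recurrence: the fixed point of v = momentum * v - lr * grad
terminal = -lr * grad / (1 - momentum)
```

The first entry of `steps` equals a plain SGD step (-0.01), and later entries approach `terminal` (-0.1). When the gradient keeps flipping sign instead, successive contributions to the velocity partly cancel, which damps oscillation.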

AdaGrad

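In neural network training, the value of the learning rate $\eta$ matters a great deal: if it is too small, training takes too long, and if it is too large, training can diverge.

AdaGrad adapts the learning rate for each individual element of the parameters as training proceeds. The update rule, written to match the implementation that follows, is:

$$h \leftarrow h + \frac{\partial L}{\partial W} \odot \frac{\partial L}{\partial W}$$

$$W \leftarrow W - \eta \, \frac{1}{\sqrt{h} + \varepsilon} \odot \frac{\partial L}{\partial W}$$

Here $h$ accumulates the element-wise squares of all past gradients, $\odot$ is element-wise multiplication, and $\varepsilon$ is a small constant (1e-7 in the code) that prevents division by zero. Elements that have received large gradients so far get a smaller effective learning rate.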

A Python implementation of AdaGrad:

    import numpy as np


    class AdaGrad:
        """AdaGrad"""

        def __init__(self, lr=0.01):
            self.lr = lr
            self.h = None  # running sum of squared gradients per parameter

        def update(self, params, grads):
            if self.h is None:
                self.h = {}
                for key, val in params.items():
                    self.h[key] = np.zeros_like(val)

            for key in params.keys():
                self.h[key] += grads[key] * grads[key]
                # 1e-7 avoids division by zero when h is still 0
                params[key] -= self.lr * grads[key] / (np.sqrt(self.h[key]) + 1e-7)
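
The per-element adaptation can be made visible with a short sketch. The two gradient magnitudes are assumptions picked to contrast a steep direction with a shallow one; the accumulation and the scaling factor are the same as in the class above.

```python
import numpy as np

lr = 0.01
h = np.zeros(2)
grad = np.array([10.0, 0.1])   # assumed: one steep and one shallow direction

effective_lr = []
for _ in range(100):
    h += grad * grad                               # accumulate squared gradients
    effective_lr.append(lr / (np.sqrt(h) + 1e-7))  # factor applied to the gradient
effective_lr = np.array(effective_lr)              # shape (100, 2)
```

The element with the larger gradients gets the smaller effective learning rate, and both rates shrink monotonically because `h` never decreases. That monotone decay is also AdaGrad's known weakness: over a long run the updates can become very small.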

Adam

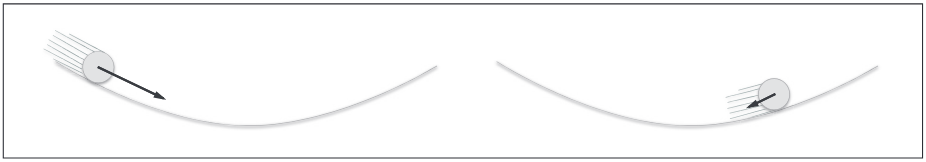

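Momentum moves the parameters according to the physics of a ball rolling in a bowl, while AdaGrad adjusts the update step for each individual element of the parameters. Combining the two is the basic idea behind Adam.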

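The formulas for Adam are listed below. The full procedure is fairly involved; for a first understanding, the basic idea described above is enough.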

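- Initialization: set $t = 0$ and initialize the model parameters $W$, the learning rate $\eta$, and the hyperparameters $\beta_1$, $\beta_2$, and $\varepsilon$. For each parameter, initialize the first-moment estimate $m_0 = 0$ and the second-moment estimate $v_0 = 0$.

- Set $t \leftarrow t + 1$ and compute the gradient of the objective function $L$ with respect to the parameters: $g_t = \nabla_W L(W_{t-1})$.

- Update the first-moment estimate: $m_t = \beta_1 m_{t-1} + (1 - \beta_1)\, g_t$.

- Update the second-moment estimate: $v_t = \beta_2 v_{t-1} + (1 - \beta_2)\, g_t^2$ (the square is element-wise).

- Correct the bias in the first- and second-moment estimates: $\hat{m}_t = m_t / (1 - \beta_1^t)$ and $\hat{v}_t = v_t / (1 - \beta_2^t)$.

- Compute the adaptive learning rate: $\eta_t = \eta / (\sqrt{\hat{v}_t} + \varepsilon)$.

- Update the model parameters with the adaptive learning rate: $W_t = W_{t-1} - \eta_t \, \hat{m}_t$.

- Repeat steps 2-7 until convergence. The counter is incremented at the start of step 2 so that the first bias correction uses $t = 1$ and never divides by zero.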

With these formulas, Adam adapts the learning rate of each parameter individually, which helps training converge faster.
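
The listed steps can also be sketched literally, one line per step, before looking at the more compact class. The function name `adam_step`, the example loss sum(W**2), and the value of the small constant eps are assumptions for illustration.

```python
import numpy as np


def adam_step(w, grad, m, v, t, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-7):
    """One Adam update, written step by step (hypothetical helper)."""
    t += 1                                    # advance the step counter
    m = beta1 * m + (1 - beta1) * grad        # first-moment estimate
    v = beta2 * v + (1 - beta2) * grad ** 2   # second-moment estimate
    m_hat = m / (1 - beta1 ** t)              # bias correction
    v_hat = v / (1 - beta2 ** t)
    step_size = lr / (np.sqrt(v_hat) + eps)   # adaptive learning rate
    w = w - step_size * m_hat                 # parameter update
    return w, m, v, t


w = np.array([1.0, -2.0])
m = np.zeros_like(w)
v = np.zeros_like(w)
t = 0

grad = 2.0 * w                                # gradient of the assumed loss sum(w**2)
w_new, m, v, t = adam_step(w, grad, m, v, t)
first_step = w_new - w
```

On the very first update the bias-corrected moments reduce to the gradient and its square, so each element moves by almost exactly lr in the direction opposite to its gradient, regardless of the gradient's magnitude.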

A Python implementation of Adam:

    import numpy as np


    class Adam:
        """Adam (http://arxiv.org/abs/1412.6980v8)"""

        def __init__(self, lr=0.001, beta1=0.9, beta2=0.999):
            self.lr = lr
            self.beta1 = beta1
            self.beta2 = beta2
            self.iter = 0   # number of updates performed so far
            self.m = None   # first-moment estimates, created on the first update
            self.v = None   # second-moment estimates, created on the first update

        def update(self, params, grads):
            if self.m is None:
                self.m, self.v = {}, {}
                for key, val in params.items():
                    self.m[key] = np.zeros_like(val)
                    self.v[key] = np.zeros_like(val)

            self.iter += 1
            # Both bias corrections are folded into the learning rate
            lr_t = self.lr * np.sqrt(1.0 - self.beta2**self.iter) / (1.0 - self.beta1**self.iter)

            for key in params.keys():
                # Same as m = beta1*m + (1-beta1)*grad and v = beta2*v + (1-beta2)*grad**2
                self.m[key] += (1 - self.beta1) * (grads[key] - self.m[key])
                self.v[key] += (1 - self.beta2) * (grads[key]**2 - self.v[key])
                params[key] -= lr_t * self.m[key] / (np.sqrt(self.v[key]) + 1e-7)
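
The class never computes bias-corrected moments explicitly; it folds both corrections into lr_t instead. The following sketch checks numerically that the two forms give the same step when the small constant in the denominator is left out. The gradient sequence is an arbitrary assumption.

```python
import math

lr, beta1, beta2 = 0.001, 0.9, 0.999
m, v = 0.0, 0.0
max_gap = 0.0

for t, grad in enumerate([0.5, -1.5, 2.0, 0.25, -0.75], start=1):
    m += (1 - beta1) * (grad - m)
    v += (1 - beta2) * (grad ** 2 - v)

    # Form used in the class: corrections folded into the learning rate
    lr_t = lr * math.sqrt(1.0 - beta2 ** t) / (1.0 - beta1 ** t)
    folded = lr_t * m / math.sqrt(v)

    # Explicit form: correct the moments first, then take the step
    m_hat = m / (1.0 - beta1 ** t)
    v_hat = v / (1.0 - beta2 ** t)
    explicit = lr * m_hat / math.sqrt(v_hat)

    max_gap = max(max_gap, abs(folded - explicit))
```

With the constant included, the two forms differ very slightly, because the class adds 1e-7 to the square root of the uncorrected second moment rather than the corrected one.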