Bert-vits2-2.3-Final: a one-click all-in-one package for the final Bert-vits2 release (recreating Ada Wong from Resident Evil)

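Bert-vits2 recently released its latest version, 2.3-final, which as the name says is the final release. It fixes some known bugs and adds a WavLM-based Discriminator (taken from StyleTTS2). Surprisingly, because emotion control did not work well, the CLAP emotion model was removed and replaced with a relatively simple BERT semantic fusion approach.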

In fact, in my tests of version 2.2 the CLAP emotion model worked reasonably well. For version 2.2, see:

Bert-vits2-v2.2 new-version local training and inference package (Genshin Impact Yae Miko English model, miko)

For more information, see the official Bert-vits2 release page:

https://github.com/fishaudio/Bert-VITS2/releases/tag/v2.3

This time we use the latest Bert-vits2-2.3 to recreate the voice of Ada Wong, the classic Resident Evil character.

Bert-vits2-2.3 project setup

First, clone the project:

git clone https://github.com/v3ucn/Bert-vits2-V2.3.git

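Note that this project is forked from the 2.3 branch of Bert-vits2. On top of that branch it adds audio slicing and transcription/labeling features, which make it easier to use.

Then enter the project directory: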

cd Bert-vits2-V2.3

Install the dependencies:

pip3 install -r requirements.txt

Then download the required models, starting with the BERT models:

https://openi.pcl.ac.cn/Stardust_minus/Bert-VITS2/modelmanage/show_model

Place them in the bert directory:

E:\work\Bert-VITS2-2.3\bert>tree /f
Folder PATH listing for volume myssd
Volume serial number is 7CE3-15AE
E:.
│   bert_models.json
│
├───bert-base-japanese-v3
│       .gitattributes
│       config.json
│       README.md
│       tokenizer_config.json
│       vocab.txt
│
├───bert-large-japanese-v2
│       .gitattributes
│       config.json
│       README.md
│       tokenizer_config.json
│       vocab.txt
│
├───chinese-roberta-wwm-ext-large
│       .gitattributes
│       added_tokens.json
│       config.json
│       pytorch_model.bin
│       README.md
│       special_tokens_map.json
│       tokenizer.json
│       tokenizer_config.json
│       vocab.txt
│
├───deberta-v2-large-japanese
│       .gitattributes
│       config.json
│       pytorch_model.bin
│       README.md
│       special_tokens_map.json
│       tokenizer.json
│       tokenizer_config.json
│
├───deberta-v2-large-japanese-char-wwm
│       .gitattributes
│       config.json
│       pytorch_model.bin
│       README.md
│       special_tokens_map.json
│       tokenizer_config.json
│       vocab.txt
│
└───deberta-v3-large
        .gitattributes
        config.json
        generator_config.json
        pytorch_model.bin
        README.md
        spm.model
        tokenizer_config.json

Note that the pytorch_model.bin file in each subdirectory is the BERT model itself, that is, the model weights.
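Because the weights are the part most easily forgotten when copying model folders, a quick check can help. The following is a minimal sketch, not part of the project: the helper name `find_missing_weights` is made up for illustration, and it assumes only that each model lives in its own subdirectory of `bert` and that the weight file is named pytorch_model.bin, as in the listing above. If that listing is complete, the check would flag bert-base-japanese-v3 and bert-large-japanese-v2, which show no weight file there.

```python
from pathlib import Path


def find_missing_weights(bert_root, weight_name="pytorch_model.bin"):
    """Return the names of model subdirectories that lack the weight file.

    Hypothetical helper for illustration; it is not part of Bert-vits2.
    """
    root = Path(bert_root)
    missing = []
    # Only subdirectories are models; files such as bert_models.json are skipped.
    for sub in sorted(p for p in root.iterdir() if p.is_dir()):
        if not (sub / weight_name).is_file():
            missing.append(sub.name)
    return missing


if __name__ == "__main__":
    bert_dir = Path("bert")  # relative to the project root
    if bert_dir.is_dir():
        for name in find_missing_weights(bert_dir):
            print(f"missing pytorch_model.bin in: {name}")
    else:
        print("bert directory not found; run this from the project root")
```

Whether a model without weights actually matters depends on which languages you train, so treat the output as a reminder rather than an error.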

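Next, you still need to download the CLAP model, even though inference no longer uses it. Also download the wav2vec2-large-robust-12-ft-emotion-msp-dim model, and place both in the project's emotional directory: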

E:\work\Bert-VITS2-2.3\emotional>tree /f
Folder PATH listing for volume myssd
Volume serial number is 7CE3-15AE
E:.
├───clap-htsat-fused
│       .gitattributes
│       config.json
│       merges.txt
│       preprocessor_config.json
│       pytorch_model.bin
│       README.md
│       special_tokens_map.json
│       tokenizer.json
│       tokenizer_config.json
│       vocab.json
│
└───wav2vec2-large-robust-12-ft-emotion-msp-dim
        .gitattributes
        config.json
        LICENSE
        preprocessor_config.json
        pytorch_model.bin
        README.md
        vocab.json

Finally, download the base (pretrained) model:

https://huggingface.co/OedoSoldier/Bert-VITS2-2.3

Place it in the character's models directory (Data/ada/models in this walkthrough).

Note that the 2.3 base model consists of 4 files.

Bert-vits2-2.3 data preprocessing

Put Ada Wong's voice material in the Data/ada/raw directory and run the slicing script:

python3 audio_slicer.py

It cuts the material into short clips:

E:\work\Bert-VITS2-2.3\Data\ada\raw>tree /f

Folder PATH listing for volume myssd

Volume serial number is 7CE3-15AE

E:.

ada_0.wav

ada_1.wav

ada_10.wav

ada_11.wav

ada_12.wav

ada_13.wav

ada_14.wav

ada_15.wav

ada_16.wav

ada_17.wav

ada_18.wav

ada_19.wav

ada_2.wav

ada_20.wav

ada_21.wav

ada_22.wav

ada_23.wav

ada_24.wav

ada_25.wav

ada_26.wav

ada_3.wav

ada_4.wav

ada_5.wav

ada_6.wav

ada_7.wav

ada_8.wav

ada_9.wav
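Before transcribing, it can be useful to see how long each clip is. The sketch below is an illustration only and is not part of the project: the helper names `natural_key` and `clip_durations` are made up, and it assumes the clips are uncompressed PCM WAV files, which is all the standard-library `wave` module can read. It also lists the clips in numeric order (ada_2.wav before ada_10.wav) rather than the lexicographic order that `tree /f` prints.

```python
import re
import wave
from pathlib import Path


def natural_key(path):
    """Sort key that orders ada_2.wav before ada_10.wav."""
    parts = re.split(r"(\d+)", Path(path).name)
    # re.split with a capturing group alternates text (even index) and digits (odd index).
    return [int(p) if i % 2 else p for i, p in enumerate(parts)]


def clip_durations(raw_dir):
    """Return (file name, duration in seconds) pairs for PCM WAV files in raw_dir."""
    results = []
    for wav_path in sorted(Path(raw_dir).glob("*.wav"), key=natural_key):
        with wave.open(str(wav_path), "rb") as wf:
            results.append((wav_path.name, wf.getnframes() / wf.getframerate()))
    return results


if __name__ == "__main__":
    # Path taken from this walkthrough; adjust it for your own character.
    for name, seconds in clip_durations("Data/ada/raw"):
        print(f"{name}: {seconds:.2f}s")
```

Unusually long or very short clips are worth listening to before training, since a bad cut usually also produces a bad transcription.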

Then run transcription and labeling:

python3 short_audio_transcribe.py

The program outputs:

E:\work\Bert-VITS2-2.3\venv\lib\site-packages\whisper\timing.py:58: NumbaDeprecationWarning: The 'nopython' keyword argument was not supplied to the 'numba.jit' decorator. The implicit default value for this argument is currently False, but it will be changed to True in Numba 0.59.0. See https://numba.readthedocs.io/en/stable/reference/deprecation.html#deprecation-of-object-mode-fall-back-behaviour-when-using-jit for details.

def backtrace(trace: np.ndarray):

Data/ada/raw

Detected language: en

I do. The kind you like.

Processed: 1/27

Detected language: en

Now where's the amber?

Processed: 2/27

Detected language: en

Leave the girl. She's lost no matter what.

Processed: 3/27

Detected language: en

You walk away now, and who knows?

Processed: 4/27

Detected language: en

Maybe you'll live to meet me again.

Processed: 5/27

Detected language: en

And I might get you that greeting you were looking for.

Processed: 6/27

Detected language: en

How about we continue this discussion another time?

Processed: 7/27

Detected language: en

Sorry, nothing yet.

Processed: 8/27

Detected language: en

But my little helper is creating

Processed: 9/27

Detected language: en

Quite the commotion.

Processed: 10/27

Detected language: en

Everything will work out just fine.

Processed: 11/27

Detected language: en

He's a good boy. Predictable.

Processed: 12/27

Detected language: en

The deal was, we get you out of here when you deliver the amber. No amber, no protection, Louise.

Processed: 13/27

Detected language: en

Nothing personal, Leon.

Processed: 14/27

Detected language: en

Louise and I had an arrangement.

Processed: 15/27

Detected language: en

Don't worry, I'll take good care of it.

Processed: 16/27

Detected language: en

Just one question.

Processed: 17/27

Detected language: en

What are you planning to do with this?

Processed: 18/27

Detected language: en

So, we're talking millions of casualties?

Processed: 19/27

Detected language: en

We're changing course. Now.

Processed: 20/27

Detected language: en

You can stop right there, Leon.

Processed: 21/27

Detected language: en

wouldn't make me use this.

Processed: 22/27

Detected language: en

Would you? You don't seem surprised.

Processed: 23/27

Detected language: en

Interesting.

Processed: 24/27

Detected language: en

Not a bad move

Processed: 25/27

Detected language: en

Very smooth. Ah, Leon.

Processed: 26/27

Detected language: en

You know I don't work and tell.

Note that whisper emits a warning here. If it bothers you, you can edit line 58 of timing.py in the installed whisper package:

Before:

@numba.jit

def backtrace(trace: np.ndarray):

After:

@numba.jit(nopython=True)

def backtrace(trace: np.ndarray):
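If you would rather not edit a file inside site-packages, an alternative is to filter out just this warning with the standard `warnings` module. This is a minimal sketch and not something the project ships: the helper name `silence_numba_nopython_warning` is made up, and it assumes the filter is installed before `import whisper` runs, for example at the very top of the transcription script, because the log above suggests the warning is raised while timing.py is being imported.

```python
import warnings


def silence_numba_nopython_warning():
    """Ignore only the numba warning about the missing 'nopython' argument.

    Hypothetical helper for illustration. The pattern matches the message
    text shown in the log, so other warnings are still displayed.
    """
    warnings.filterwarnings(
        "ignore",
        message=r".*'nopython' keyword argument was not supplied.*",
    )


# Install the filter first; a later `import whisper` then stays quiet
# about this particular message.
silence_numba_nopython_warning()
```

Unlike the edit to timing.py, this only hides the message and does not change how numba compiles the function.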

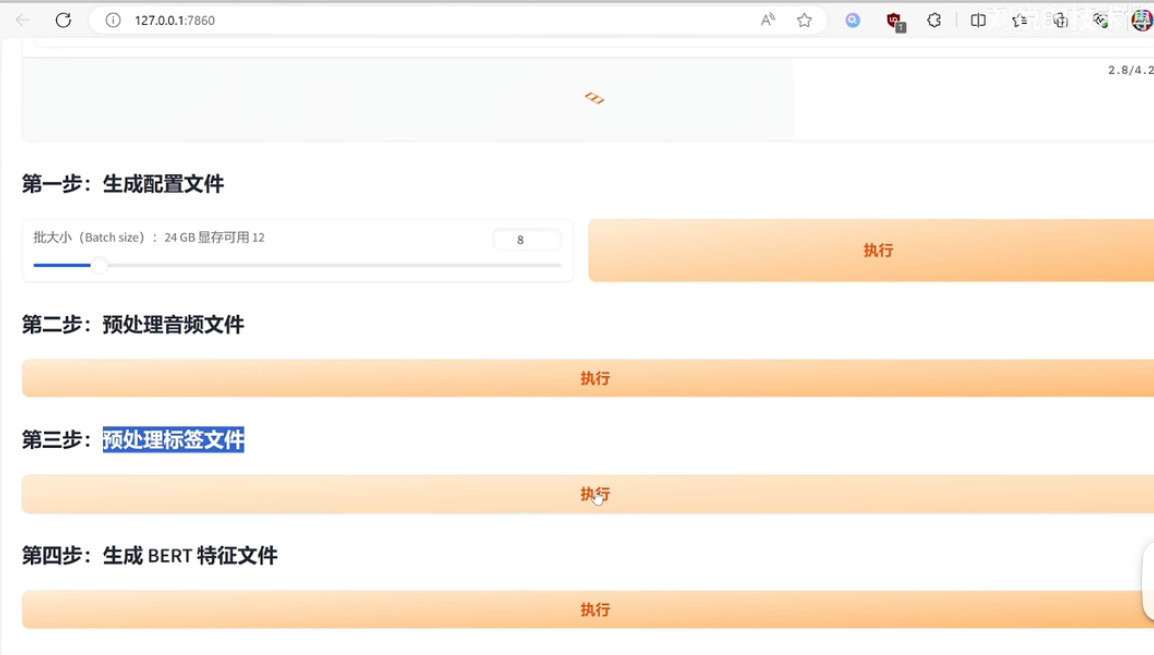

Then launch the preprocessing web UI:

python3 webui_preprocess.py

Then follow the prompts on the page.

That completes data preprocessing.

Bert-vits2-2.3 training and inference

Run this command from the project root:

python3 train_ms.py

The trained model is written to the models directory:

E:\work\Bert-VITS2-2.3\Data\ada\models>tree/f

Folder PATH listing for volume myssd

Volume serial number is 7CE3-15AE

E:.

G_150.pth
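As training continues, more checkpoints accumulate in this directory. The sketch below picks the newest generator checkpoint by step number. It is an illustration only: the helper name `latest_generator_checkpoint` is made up, and the `G_<step>.pth` naming pattern is an assumption inferred from the single G_150.pth file shown above.

```python
import re
from pathlib import Path


def latest_generator_checkpoint(models_dir):
    """Return the G_<step>.pth path with the highest step, or None if there is none.

    Hypothetical helper; the G_<step>.pth pattern is assumed from the listing.
    """
    root = Path(models_dir)
    if not root.is_dir():
        return None
    pattern = re.compile(r"G_(\d+)\.pth")
    best_step, best_path = -1, None
    for path in root.iterdir():
        match = pattern.fullmatch(path.name)
        # Compare steps as integers so that G_1000 ranks above G_150.
        if match and int(match.group(1)) > best_step:
            best_step, best_path = int(match.group(1)), path
    return best_path


if __name__ == "__main__":
    print(latest_generator_checkpoint("Data/ada/models"))
```

Comparing steps numerically matters here because a plain string sort would place G_1000.pth before G_150.pth.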

Then open the inference page to run inference:

python3 webui.py

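The new inference page can use the semantics of an auxiliary text to guide generation (keep its language the same as the main text). In other words, a prompt customizes the style of the generated speech.

However, instruction-style text (such as "happy") does not work. Use text that carries strong emotion instead (such as "I'm so happy!!!").

As a result, getting the emotional style you want involves a fair amount of trial and error:

You have to keep adjusting the prompt and testing the result, which is less intuitive than the audio prompt used by the earlier CLAP emotion model. Objectively speaking, though, steering the emotional style of the speech with BERT text semantics does work to some extent.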

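Closing remarks

While updating these basic Bert-vits2 tutorials I also learned a lot. Without a doubt, Bert-vits2 has let more people experience the appeal of deep learning, and it is an excellent introductory AI project. Interest is always the best teacher, and I hope that encourages us all. Finally, here is the Bert-vits2-2.3-Final all-in-one package:

Package link: https://pan.baidu.com/s/182LZCu5cyR3nH8EoTBLR-g?pwd=v3uc

Shared with everyone.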